Fine-Tuning vs RAG: How to Choose for Your AI Product

A practical decision framework for choosing between fine-tuning and retrieval-augmented generation — with cost, latency, and maintenance tradeoffs explained.

Fine-tuning vs. RAG is one of the most common architecture decisions for AI product teams building on top of foundation models, and one that teams often get wrong. The default pattern in 2024 was “start with RAG, fine-tune when RAG isn’t enough”. That’s a reasonable default, but it’s not a decision framework. The right choice depends on what you’re actually trying to change: what the model knows, or how it behaves.

This piece provides a practical framework for making the fine-tuning vs. RAG decision — covering the knowledge vs. behaviour distinction, cost and latency tradeoffs, and maintenance burden over time.

The Core Distinction: Knowledge vs. Behaviour

The most important question in the fine-tuning vs. RAG decision is: are you trying to give the model knowledge it doesn’t have, or are you trying to change how the model behaves?

RAG solves the knowledge problem. If your model gives incorrect or outdated answers because it doesn’t have access to relevant information — your product documentation, your knowledge base, your customer’s data, recent events — RAG is the right tool. RAG retrieves relevant context at inference time and provides it to the model as part of the prompt. The model’s underlying capabilities and behaviour are unchanged; it simply has access to relevant information it wouldn’t otherwise have.
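To make the retrieve-then-prompt flow concrete, here is a minimal sketch. It assumes a tiny in-memory corpus and uses bag-of-words cosine similarity as a stand-in for a real embedding model and vector database. The document ids, their contents, and the function names are all hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical mini-corpus; in a real system these would be chunks of your docs.
DOCS = {
    "billing.md": "Invoices are issued on the first day of each month.",
    "sso.md": "Single sign-on is available on the Enterprise plan.",
    "limits.md": "The API rate limit is 100 requests per minute per key.",
}

def tokenize(text: str) -> Counter:
    """Lowercased bag-of-words; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query: str, k: int = 2) -> list[tuple[str, str]]:
    """Return the k (doc_id, text) pairs most similar to the query."""
    q = tokenize(query)
    ranked = sorted(DOCS.items(), key=lambda kv: cosine(q, tokenize(kv[1])), reverse=True)
    return ranked[:k]

def build_prompt(query: str, k: int = 2) -> str:
    """Assemble the prompt: retrieved context first, then the user's question."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(query, k))
    return (
        "Answer using only the context below and cite the source id in brackets.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )

if __name__ == "__main__":
    print(build_prompt("What is the API rate limit?"))
```

The model itself is untouched here: only the prompt changes. Because each context line carries its source id, the same structure also gives the model something to cite.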

Fine-tuning solves the behaviour problem. If your model has the knowledge but doesn’t use it correctly — wrong tone, wrong format, wrong reasoning patterns, inconsistent output structure — fine-tuning is the right tool. Fine-tuning adjusts the model’s weights to produce outputs that better match the target distribution, making behaviour changes that can’t be achieved through prompting alone.

The common mistake is using fine-tuning when RAG is the right tool: spending weeks and significant cost fine-tuning a model to “know” domain-specific information, when a well-designed RAG pipeline would have achieved better results at a fraction of the cost — and would update automatically as the knowledge base changes.

When to Use RAG

Use RAG when:

The knowledge is dynamic. If your knowledge base updates frequently (new documentation, new products, new events), RAG is the better fit. A fine-tuned model encodes knowledge as it stood at training time and needs retraining to pick up changes. A RAG system reflects changes as soon as the retrieval corpus is re-indexed.

The knowledge is large. Fine-tuning encodes knowledge in model weights, which creates a capacity constraint. RAG can retrieve from arbitrarily large corpora. If your knowledge base is tens of thousands of documents, fine-tuning is not the right tool for injecting that knowledge.

Accuracy and citation matter. RAG systems can attribute their answers to source documents — which is critical for applications where users need to verify the source of information. Fine-tuned models generate from learned distributions; they cannot cite the specific training example that drove an output.

Cost is a constraint. RAG requires infrastructure (a vector database, an embedding model, retrieval logic) but doesn’t require the compute cost of fine-tuning runs or the higher per-token prices that hosted fine-tuned models typically carry. For knowledge injection use cases, RAG is almost always cheaper.

When to Use Fine-Tuning

Use fine-tuning when:

You need consistent output format. If your application requires structured output — JSON with a specific schema, markdown with consistent formatting, code in a specific style — fine-tuning is more reliable than prompt engineering for enforcing this consistency at scale.

You need specific reasoning patterns. If the model needs to reason in a domain-specific way that the base model doesn’t naturally produce — legal reasoning patterns, clinical reasoning styles, specific analytical frameworks — fine-tuning can instil these patterns more reliably than few-shot prompting.

Latency is critical. RAG adds latency for the retrieval step, typically 100–500ms for embedding plus vector search, and the retrieved context lengthens the prompt the model has to process. If your latency budget is tight and the knowledge can be encoded statically, a fine-tuned model without a retrieval step is faster.

You have high-quality labelled data. Fine-tuning is only as good as the training data. If you have a large dataset of high-quality input-output examples that represent the desired model behaviour, fine-tuning can produce consistent behaviour improvements. Without high-quality training data, fine-tuning often doesn’t improve over prompt engineering.
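As an illustration of what "high-quality input-output examples" can look like for a structured-output use case, the sketch below checks every target against a required schema before writing it to JSONL. The `messages` chat layout is an assumption about what your fine-tuning provider accepts, and the schema keys and helper names are hypothetical.

```python
import json

# Hypothetical target schema: every assistant output must contain these keys.
REQUIRED_KEYS = {"sentiment", "confidence"}

def conforms(output: str, required_keys: set[str] = REQUIRED_KEYS) -> bool:
    """True if the text parses as a JSON object containing all required keys."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(parsed, dict) and required_keys.issubset(parsed.keys())

def to_record(user_text: str, target: dict) -> dict:
    """Build one training example, rejecting targets that break the schema."""
    missing = REQUIRED_KEYS.difference(target.keys())
    if missing:
        raise ValueError(f"target is missing keys: {sorted(missing)}")
    return {
        "messages": [
            {"role": "user", "content": user_text},
            {"role": "assistant", "content": json.dumps(target, sort_keys=True)},
        ]
    }

def to_jsonl(pairs: list[tuple[str, dict]]) -> str:
    """Serialise (input, target) pairs as one JSON object per line."""
    return "\n".join(json.dumps(to_record(text, target)) for text, target in pairs)

if __name__ == "__main__":
    examples = [
        ("The onboarding was painless.", {"sentiment": "positive", "confidence": 0.9}),
        ("Support never replied.", {"sentiment": "negative", "confidence": 0.8}),
    ]
    print(to_jsonl(examples))
```

The same `conforms` check can be run over a sample of the base model's outputs first. If prompting alone already produces conforming output nearly every time, the format argument for fine-tuning is weak.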

Cost Comparison

RAG infrastructure costs: Embedding model inference (can be batched cheaply), a vector database (managed options such as Pinecone, Weaviate, and Qdrant are typically priced in the range of $0.08–$0.70 per million vectors per month), and the retrieval latency overhead on each request. Ongoing cost scales with query volume and corpus size.

Fine-tuning costs: Training compute (varies significantly by model size and dataset size; as a rough guide, GPT-4 fine-tuning on a 50K-example dataset runs several hundred to a few thousand dollars), serving cost (fine-tuned models via APIs are typically priced higher than base models), and the hidden cost of retraining when requirements or knowledge change.

For most teams, RAG has lower total cost for knowledge injection use cases. Fine-tuning cost is justified when the behaviour improvements are significant and the training data is high quality.
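The comparison above can be turned into a back-of-the-envelope calculation. This is a rough sketch only: every figure in the example (query volume, token counts, prices, retraining cadence) is a made-up placeholder, not real pricing. It also assumes that retrieved context adds input tokens to each RAG request, and that a fine-tuned model is billed at a multiple of the base per-token price.

```python
def rag_monthly_cost(
    queries: int,
    base_tokens: int,
    context_tokens: int,
    price_per_1k: float,
    vector_db_monthly: float,
) -> float:
    """Vector DB fee plus token cost, where each prompt carries retrieved context."""
    tokens = queries * (base_tokens + context_tokens)
    return vector_db_monthly + tokens / 1000 * price_per_1k

def fine_tuned_monthly_cost(
    queries: int,
    base_tokens: int,
    price_per_1k: float,
    price_multiplier: float,
    training_cost: float,
    retrains_per_month: float,
) -> float:
    """Amortised retraining cost plus token cost at the fine-tuned price."""
    tokens = queries * base_tokens
    return training_cost * retrains_per_month + tokens / 1000 * price_per_1k * price_multiplier

if __name__ == "__main__":
    # Placeholder inputs; substitute your own volumes and your provider's prices.
    rag = rag_monthly_cost(
        queries=100_000, base_tokens=500, context_tokens=1_500,
        price_per_1k=0.001, vector_db_monthly=50.0,
    )
    tuned = fine_tuned_monthly_cost(
        queries=100_000, base_tokens=500, price_per_1k=0.001,
        price_multiplier=2.0, training_cost=1_000.0, retrains_per_month=0.5,
    )
    print(f"RAG: ${rag:,.2f}/month, fine-tuned: ${tuned:,.2f}/month")
```

The useful part is seeing which inputs dominate, not the totals themselves. Retraining frequency drives the fine-tuning side, while context size and query volume drive the RAG side.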

Maintenance Burden

RAG maintenance: Update the corpus and the system improves automatically. The main maintenance burden is corpus quality: outdated documents, conflicting information, and poor chunking all degrade retrieval quality. Retrieval quality needs to be evaluated periodically.
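One common way to run that periodic check is recall@k over a small labelled query set. The sketch below assumes you maintain a set of test queries, each with the ids of the documents a good retriever should return. The function names and the sample data are hypothetical.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        raise ValueError("each query needs at least one relevant document")
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)

def mean_recall_at_k(runs: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@k over (retrieved_ids, relevant_ids) pairs."""
    if not runs:
        raise ValueError("no evaluation queries supplied")
    return sum(recall_at_k(retrieved, relevant, k) for retrieved, relevant in runs) / len(runs)

if __name__ == "__main__":
    # Hypothetical results: ranked ids from the retriever, then the labelled ids.
    runs = [
        (["limits.md", "sso.md", "billing.md"], {"limits.md"}),
        (["sso.md", "billing.md", "limits.md"], {"billing.md", "limits.md"}),
    ]
    print(f"mean recall@2 = {mean_recall_at_k(runs, k=2):.2f}")
```

Tracking this number after each corpus update makes regressions from stale documents or poor chunking visible before users notice them.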

Fine-tuning maintenance: When requirements change or new knowledge needs to be incorporated, retraining is required. Fine-tuned models have a higher operational burden: version management, retraining pipelines, evaluation against held-out sets, and staged rollout of new model versions.
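A minimal sketch of the "evaluate against a held-out set before rollout" step might look like this. It assumes exact-match accuracy is a meaningful metric for your task, which will not hold for free-form generation. The function names and the `min_gain` threshold are hypothetical.

```python
def accuracy(predictions: list[str], expected: list[str]) -> float:
    """Exact-match accuracy over a held-out set."""
    if not expected or len(predictions) != len(expected):
        raise ValueError("predictions and expected must be non-empty and equal length")
    return sum(p == e for p, e in zip(predictions, expected)) / len(expected)

def should_promote(candidate_acc: float, current_acc: float, min_gain: float = 0.0) -> bool:
    """Promote the retrained model only if it beats the current one by min_gain."""
    return candidate_acc >= current_acc + min_gain

if __name__ == "__main__":
    # Hypothetical held-out labels and the outputs of two model versions.
    expected = ["positive", "negative", "negative", "positive"]
    current = ["positive", "negative", "positive", "negative"]
    candidate = ["positive", "negative", "negative", "negative"]
    cur_acc = accuracy(current, expected)
    cand_acc = accuracy(candidate, expected)
    print(f"current={cur_acc:.2f} candidate={cand_acc:.2f} "
          f"promote={should_promote(cand_acc, cur_acc)}")
```

A gate like this would typically sit in front of a staged rollout, so a retrained version that regresses on the held-out set never reaches users.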

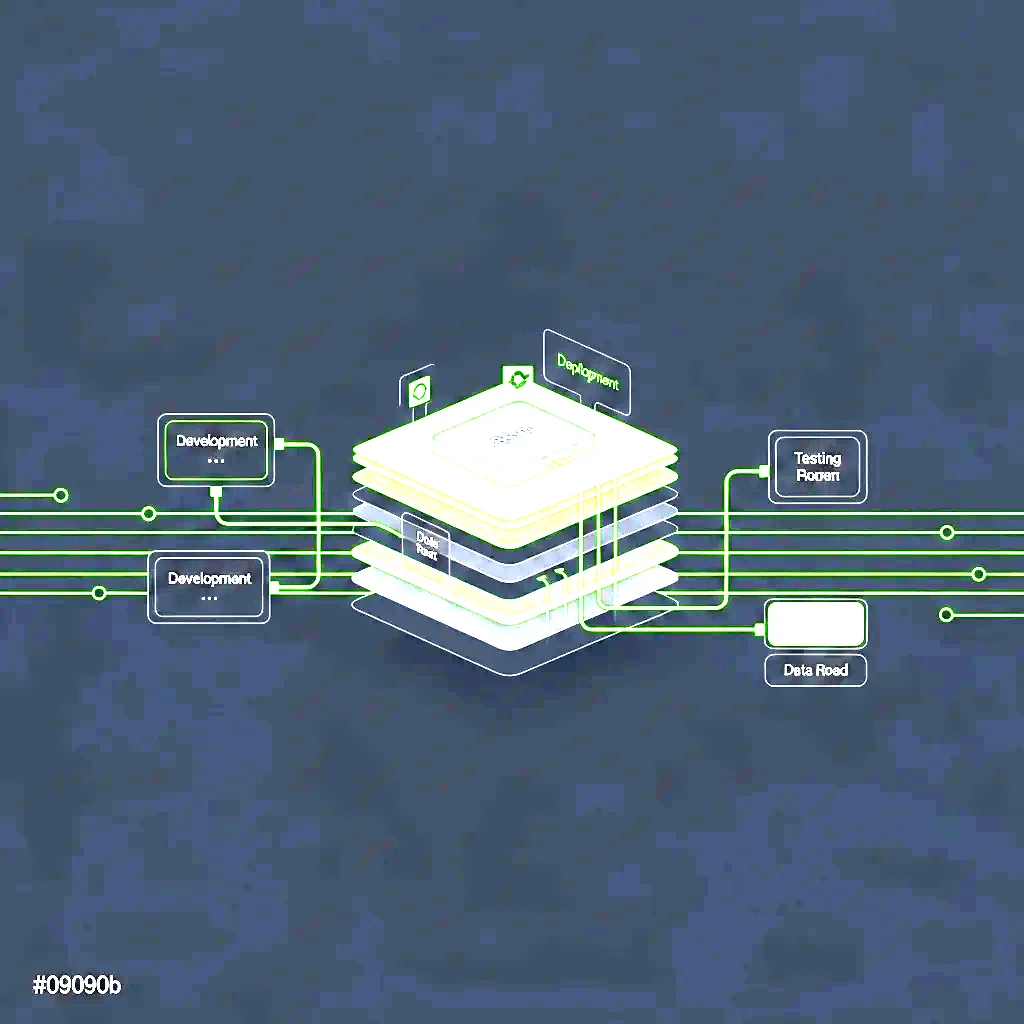

The Hybrid Pattern

Many production systems use both: fine-tuning for behaviour alignment, RAG for knowledge grounding. The fine-tuned model is trained to reason correctly in the target domain, and RAG provides the specific knowledge it needs to answer specific questions. This is often the right architecture for domain-specific AI products — the fine-tuning investment is justified for behaviour changes that can’t be achieved through prompting, while RAG handles the knowledge injection that fine-tuning would handle poorly.

The decision framework: start with RAG for knowledge problems, use fine-tuning for behaviour problems, and consider the hybrid pattern when you need both.
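The framework reduces to two questions, which can be written down as a small function. This is a sketch of the article's rule of thumb rather than a complete selection procedure. The "prompting only" outcome for the case where neither problem applies is an added assumption, and the names are hypothetical.

```python
from enum import Enum

class Approach(Enum):
    PROMPTING = "prompting only"
    RAG = "RAG"
    FINE_TUNING = "fine-tuning"
    HYBRID = "fine-tuning + RAG"

def choose_approach(needs_knowledge: bool, needs_behaviour_change: bool) -> Approach:
    """Map the knowledge-vs-behaviour diagnosis to an architecture.

    needs_knowledge: the model lacks information (docs, customer data, recent events).
    needs_behaviour_change: tone, format, or reasoning cannot be fixed by prompting.
    """
    if needs_knowledge and needs_behaviour_change:
        return Approach.HYBRID
    if needs_knowledge:
        return Approach.RAG
    if needs_behaviour_change:
        return Approach.FINE_TUNING
    return Approach.PROMPTING  # assumption: neither problem present

if __name__ == "__main__":
    print(choose_approach(needs_knowledge=True, needs_behaviour_change=False).value)
```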

